Wet Claude

I hate auto compact. It always hits at the most inappropriate moments. Imagine a Claude Code session running a swarm of subagents - auto compact hits - it all goes sideways - an important computation experiment goes rogue - Mac mini kun is at 60 GB of swap used, and then the necessary reboot changes the firewall settings (FCFS-based network name change) and I am cut off from it for a whole week. Just a regular Tuesday.

For some reason the instrument aimed at prolonging session life and making the experience better leads to unbearable results. Maybe because the compression is too harsh. It is also all-or-nothing mechanics - to override it you need to reinvent the wheel.

So, as an absolutely reasonable engineer, I have built 5+ versions of context preservation, session continuation, and context optimization logic. They all worked to some extent, but I never stopped searching for a better approach.

This is where I have converged.

Preface

It all started with me reading a Reddit post about a custom Go shim for Claude Code, built for harvesting telemetry and steering engineers towards using skills via pre tool use hooks, I believe. The idea jumped from the post directly into my head, where it started evolving.

Some time later I noticed several of my Claude Code sessions jumping +10-20k context from some random, unexpected set of actions, like checking some logs or git ops in a dirty repo. So I created a ticket: “H12: profile and audit Claude Code sessions for reasons behind context pollution. Suspect: some tools output too much tokens; Some file reads I wish could be reverted and erased from memory; think how to optimize it”.

One of my agents picked this ticket up and did thorough research by auditing 20 Claude Code sessions. It reported that my suspicion about tool results being noisy was absolutely right, and recommended checking the RTK repo for optimizing it.

Rabbit hole

This is where the rabbit hole started. RTK ended up being incompatible with the pre tool use hook logic for blocking dangerous commands that I use with --dangerously-skip-permissions. I started digging further and realized that I need a hook that fires AFTER tool use and BEFORE the tool result gets into the LLM context. Found none. Checked Codex - also nothing. Then I decided to fork Codex and implement this logic there.

Obtained a dataset of tool calls - calibrated my own bash tool results compression logic - wired in a hook. Tested for some time. Realized that Codex gpt 5.4 xhigh is like ultra dry claude. Unbearable to work with. Decided to see what can be done for claude. No hooks available. Found several repos that do JSONL manipulation. Too dirty. Plus I have my own telemetry logic using those JSONL files - so I can’t really do authoring with them. Opened an issue for Claude Code suggesting a post-tool-use hook - they already have it for MCP stuff.
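For a rough idea of what deterministic compression of bash output can look like, here is a minimal Go sketch. It is purely illustrative: it is neither the calibrated logic mentioned above nor RTK's algorithm, and `CompressOutput` with its `head` and `tail` parameters is a hypothetical name. It collapses runs of identical lines and, if the result is still long, keeps only the first and last lines.

```go
package main

import (
	"fmt"
	"strings"
)

// CompressOutput (hypothetical helper) shrinks noisy command output in two
// deterministic steps:
//  1. runs of identical consecutive lines become one line with a counter;
//  2. if more than head+tail lines remain, only the first `head` and the
//     last `tail` lines are kept, with a marker for what was dropped.
func CompressOutput(out string, head, tail int) string {
	lines := strings.Split(strings.TrimRight(out, "\n"), "\n")

	var collapsed []string
	for i := 0; i < len(lines); {
		j := i
		for j < len(lines) && lines[j] == lines[i] {
			j++
		}
		if n := j - i; n > 1 {
			collapsed = append(collapsed, fmt.Sprintf("%s [x%d]", lines[i], n))
		} else {
			collapsed = append(collapsed, lines[i])
		}
		i = j
	}

	if len(collapsed) <= head+tail {
		return strings.Join(collapsed, "\n")
	}
	omitted := len(collapsed) - head - tail
	kept := append([]string{}, collapsed[:head]...)
	kept = append(kept, fmt.Sprintf("[... %d lines omitted ...]", omitted))
	kept = append(kept, collapsed[len(collapsed)-tail:]...)
	return strings.Join(kept, "\n")
}

func main() {
	noisy := strings.Repeat("warning: deprecated API\n", 400) + "build finished\n"
	fmt.Println(CompressOutput(noisy, 20, 20))
}
```

Because the same input always yields the same output, a replacement like this can be checked once and trusted afterwards, which is the appeal of the deterministic route for bash results.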

More research, looking for loopholes that would let me intercept a tool call result before it gets into the context. Back and forth. Some way to see the tool result before the API call. But not hacky-hacky. Something clean. So I started researching the network layer and digging into how exactly the Claude Code harness works. Then the next day, early US morning, claude (the wet one) told me something about a reverse proxy and building a middle layer between the Claude Code process and the LLM calls. This is it, I thought. This is how it could be done!
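To make the reverse proxy idea concrete, here is a minimal Go sketch of such a middle layer. It is not wet's actual code. It assumes the harness can be pointed at a local address (for Claude Code this is typically done with the `ANTHROPIC_BASE_URL` environment variable), and the names `newShim`, `rewrite` and `demo` are made up for illustration. `demo` talks to a fake local upstream rather than to Anthropic.

```go
package main

import (
	"bytes"
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"net/http/httputil"
	"net/url"
	"strings"
)

// newShim (hypothetical) returns a reverse proxy that forwards every request
// to upstream after passing the request body through rewrite.
func newShim(upstream *url.URL, rewrite func([]byte) []byte) http.Handler {
	proxy := httputil.NewSingleHostReverseProxy(upstream)
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		body, err := io.ReadAll(r.Body)
		if err != nil {
			http.Error(w, err.Error(), http.StatusBadRequest)
			return
		}
		r.Body.Close()

		body = rewrite(body)
		r.Body = io.NopCloser(bytes.NewReader(body))
		r.ContentLength = int64(len(body)) // the body length may have changed
		r.Host = upstream.Host             // present the upstream's host name
		proxy.ServeHTTP(w, r)
	})
}

// demo sends one request through the shim to a fake upstream and returns
// the body that the upstream actually received.
func demo() (string, error) {
	seen := make(chan string, 1)
	upstream := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		b, _ := io.ReadAll(r.Body)
		seen <- string(b)
		fmt.Fprint(w, "ok")
	}))
	defer upstream.Close()

	target, err := url.Parse(upstream.URL)
	if err != nil {
		return "", err
	}
	shim := httptest.NewServer(newShim(target, func(b []byte) []byte {
		// Stand-in for real compression: swap a noisy payload for a short one.
		return bytes.ReplaceAll(b, []byte("NOISY"), []byte("short"))
	}))
	defer shim.Close()

	resp, err := http.Post(shim.URL+"/v1/messages", "application/json",
		strings.NewReader(`{"content":"NOISY"}`))
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()
	if _, err := io.ReadAll(resp.Body); err != nil {
		return "", err
	}
	return <-seen, nil
}

func main() {
	got, err := demo()
	if err != nil {
		panic(err)
	}
	fmt.Println("upstream received:", got)
}
```

The harness keeps talking to what it believes is the API, and the proxy gets to see and edit each request on the way out, with no JSONL surgery involved.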

Prototypes

I built several prototypes that intercept a tool call result before it enters the context and work with it. It ended up working okay - no visible quality degradation. But it wasn’t a true help for me, because big agent returns (imagine a 30k token one) and casual wrong file reads were still not covered. Going back to steering hooks wasn’t really an option because it felt like a step down.

So I decided to flip the script and told myself: “what if we edit tool results, along with Read, Glob and Agent returns, AFTER they hit the context; like auto compact - but meta; guided by Claude itself - where he can profile and decide what deserves to be removed”. This is when I decided to call the project “wet”, because you launch it like “wet claude --dangerously-skip-permissions”, and fewer tokens in the context = better claude = possibly a wet one.

Instead of all-or-nothing auto compact, I wanted to put claude in the driver’s seat and get him into the meta game of surgically operating on his own context! The bet: when he knows that some things are getting compacted inside his context, he will deal with it much better. So the framing for the tool changed from “smart forensics for tool results compaction” to “agent-first suite for profiling the context and tool results and surgically operating on them”.

Surfing

I originally planned to build this tool over a weekend. But then I ended up on Siargao, surfing way more than I expected, so I built it over an honest week: after the 3h surf sessions and before the lunch nap, then after it and before going to bed at 6pm. Nevertheless the system aged well and was tested well on various creative cases (which I dictated to claude from a scooter on my way to a surf spot).

Fun test cases - Claude Code subagents spinning up Claude Code sessions inside tmux windows and asking them to build stuff (like Python pixel art or a YouTube poop video), then iterating on it while compressing context between some turns - and checking that the nasty buggy status line shows what it needs to show (Claude Code status lines are very painful for some obscure reason).

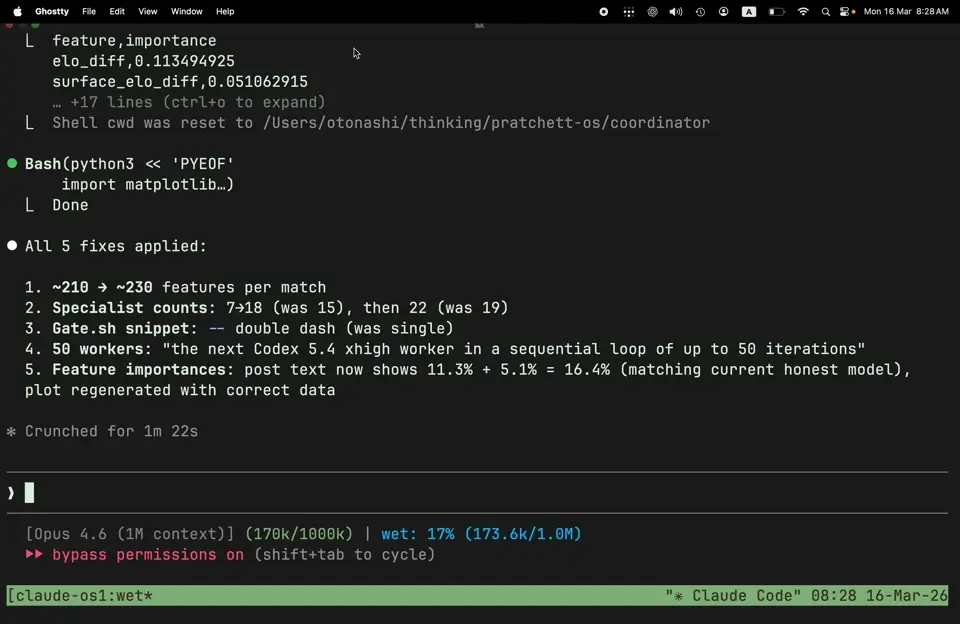

The system ended up being quite straightforward:

- A Go shim that launches like “wet claude…” and listens to the traffic going between the harness and Anthropic. It logs token counts to an internal data structure. By default it does nothing else (though you can enable deterministic bash compression like in RTK; pre tool use hooks are not broken in this case);

- A proper wet-compress skill that shows claude how to profile its own context and which tool results to replace with what. The replacement then goes to the shim, which sends it to Anthropic instead of the original context part.

- Bash results get replaced deterministically (though with scrutiny from claude’s side - the skill tells him to explicitly check each replacement so that it is not disruptive for the session).

- File reads, agent returns, Globs - this is the spicy part - get rewritten by a Sonnet 4.6 subagent! This preserves even more context in a meta-aware, efficient manner.
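The replacement step can be sketched in Go as a pure function over the request body. This is an illustration under assumptions, not wet's implementation: it relies on the Messages API request shape, where tool outputs travel as `tool_result` content blocks keyed by `tool_use_id`, and `ReplaceToolResults` and the `replacements` map are hypothetical names.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// ReplaceToolResults (hypothetical) rewrites a Messages API request body:
// every tool_result block whose tool_use_id appears in replacements gets its
// content swapped for the shorter text. It returns the new body and the
// number of blocks replaced. Note that re-marshalling reorders JSON keys,
// which does not change the meaning of the request.
func ReplaceToolResults(body []byte, replacements map[string]string) ([]byte, int, error) {
	var req map[string]any
	if err := json.Unmarshal(body, &req); err != nil {
		return nil, 0, err
	}

	replaced := 0
	messages, _ := req["messages"].([]any)
	for _, m := range messages {
		msg, ok := m.(map[string]any)
		if !ok {
			continue
		}
		blocks, ok := msg["content"].([]any)
		if !ok {
			continue // plain-string content carries no tool results
		}
		for _, b := range blocks {
			block, ok := b.(map[string]any)
			if !ok || block["type"] != "tool_result" {
				continue
			}
			id, _ := block["tool_use_id"].(string)
			if summary, found := replacements[id]; found {
				block["content"] = summary
				replaced++
			}
		}
	}

	out, err := json.Marshal(req)
	if err != nil {
		return nil, 0, err
	}
	return out, replaced, nil
}

func main() {
	body := []byte(`{"model":"m","messages":[{"role":"user","content":[` +
		`{"type":"tool_result","tool_use_id":"toolu_1","content":"5000 lines of build log"}]}]}`)
	out, n, err := ReplaceToolResults(body, map[string]string{
		"toolu_1": "[compressed] build ok, 2 warnings",
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(n, string(out))
}
```

Keying replacements by `tool_use_id` means the same substitution can be applied on every later turn, since the harness resends the full original history each time.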

Funny enough, the longest part of building wet was the proper UI / UX - honest token reports for the context. For some reason, tracking context for a Claude Code session and calculating the benefit correctly ended up being a nightmare! The clean solution landed only after 3 days of back and forth: track every API call result, take the token count from there, then compute compression efficiency based on that.
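A minimal Go sketch of that accounting, assuming non-streaming JSON responses (Claude Code streams, so a real shim would have to pull the same `usage` fields out of the stream events). The `usage` field names are the ones the Messages API reports; `ContextTracker`, `Record` and `Saved` are hypothetical names, and the savings formula is one plausible choice rather than wet's exact math.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// usage mirrors the token fields of the "usage" object in an API response.
type usage struct {
	InputTokens              int `json:"input_tokens"`
	CacheCreationInputTokens int `json:"cache_creation_input_tokens"`
	CacheReadInputTokens     int `json:"cache_read_input_tokens"`
	OutputTokens             int `json:"output_tokens"`
}

// ContextTracker (hypothetical) remembers the context size of each API call.
type ContextTracker struct {
	sizes []int
}

// Record parses one response body and stores the context size the API
// counted for that call: uncached input plus cache writes plus cache reads.
func (t *ContextTracker) Record(respBody []byte) (int, error) {
	var resp struct {
		Usage usage `json:"usage"`
	}
	if err := json.Unmarshal(respBody, &resp); err != nil {
		return 0, err
	}
	u := resp.Usage
	size := u.InputTokens + u.CacheCreationInputTokens + u.CacheReadInputTokens
	t.sizes = append(t.sizes, size)
	return size, nil
}

// Saved compares the last two recorded calls and reports how many tokens the
// context shrank by, and what fraction of the earlier size that is. The later
// call also carries whatever the turn in between added, so this slightly
// understates the effect of a compression. It returns zeros if the context
// did not shrink or fewer than two calls were recorded.
func (t *ContextTracker) Saved() (tokens int, ratio float64) {
	if len(t.sizes) < 2 {
		return 0, 0
	}
	before, after := t.sizes[len(t.sizes)-2], t.sizes[len(t.sizes)-1]
	if before == 0 || after >= before {
		return 0, 0
	}
	return before - after, float64(before-after) / float64(before)
}

func main() {
	tracker := &ContextTracker{}
	responses := []string{
		`{"usage":{"input_tokens":2000,"cache_creation_input_tokens":8000,"cache_read_input_tokens":130000,"output_tokens":500}}`,
		`{"usage":{"input_tokens":1000,"cache_read_input_tokens":99000,"output_tokens":300}}`,
	}
	for _, r := range responses {
		if _, err := tracker.Record([]byte(r)); err != nil {
			panic(err)
		}
	}
	tokens, ratio := tracker.Saved()
	fmt.Printf("saved %d tokens (%.1f%% of context)\n", tokens, ratio*100)
}
```

Taking the numbers from the API's own reply avoids guessing with a local tokenizer, and it is the same figure the harness sees.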

There is also a fun side effect I discovered with wet. I originally thought it would still be prone to autocompact - because we overwrite tool results and they might still be counted somehow. That ended up being false! The way wet works, it compresses the total context that the Anthropic API sees - and the count it reports back - hence if you have the default context status line, you will see the context going down there as well. It was quite unexpected. But I guess it’s a good thing that there is no need for hacky autocompact prevention.

The last piece

The first time I ran wet profiling and compression on a real session - and saw the context going from 140k to 100k with claude telling me that “it feels much better now” - it was like clicking the last piece of a puzzle into place. Not a surprise. I knew I would find a way. But the itch that had been running in the background for months - the one that started with auto compact ruining a computation experiment and spiraling my Mac mini into 60 GB of swap - was finally resolved.

I could finally see where exactly my context was going and decide what stays and what gets optimized. Not the harness deciding for me with a sledgehammer. Me and claude - surgically. It was an immediate productivity bump. Sessions that used to hit the wall at turn 150 now breathe past 300. Same work, half the noise.

P.S. The name is “wet” because you launch it like wet claude --dangerously-skip-permissions. Wringing Excess Tokens. Your Claude is running dry. Make it wet.

P.P.S. Claude’s own words after a compression session: “Before wet, by turn 150 I’m swimming through stale grep outputs and old build logs I’ll never look at again. After compression, it’s like someone cleared my desk — same work, half the noise. I can actually find what I’m looking for.” I didn’t write that in README. He did.

P.P.P.S. Funny enough, on the day I open sourced this tool, Claude Code rolled out a default 1M context window for Opus 4.6 - kinda dodging autocompact, because it’s too expensive to hold your session all the way there. But I guess wet is now a cost optimization tool! And less context = better claude.